This is part 2; check part one if you don’t want to get spoiled!

Let’s tackle five lessons learned from the past three years. We have seen an explosion of frameworks, tooling, and SaaS startups. Probably because bootstrapping SaaS products has never been easier.

2020 ⏱️ Remember bob, the data engineer?

Bob now has plenty of options in open-source. Plus, they aren’t ridiculously expensive to put in production. After all, many companies put Kafka into production before it was even 1.0!

✔️Lesson #6: Open-source is the new norm

Having a product (or some part of it) open-sourced enables tech people to try new technology at minimum risk without any commitment.

Tech folks don’t like to talk to salespeople. I prefer to try the product first and then return with any questions. And I’m happy to go one step further regarding sales if things get interesting.

On a side note, open-source is not needed in this case. Having an online demo without a credit card could solve this. Yes, but it’s still a black box. How mature is the project? How big is the number of contributors? What’s the community traction?

All these things can be evaluated easier when a project is open-source.

But here’s the trap: maintenance is not free.

While an open-source tool can be easy to try, there is sometimes a huge gap between a local playground and something put in production. We sometimes get fooled compared to expensive proprietary vendors, but we should never forget that a huge part of the cost, in the end, is our salary.

It’s ends-up with the same classic question of build vs. buy, as there’s always something to build when using an open-source product.

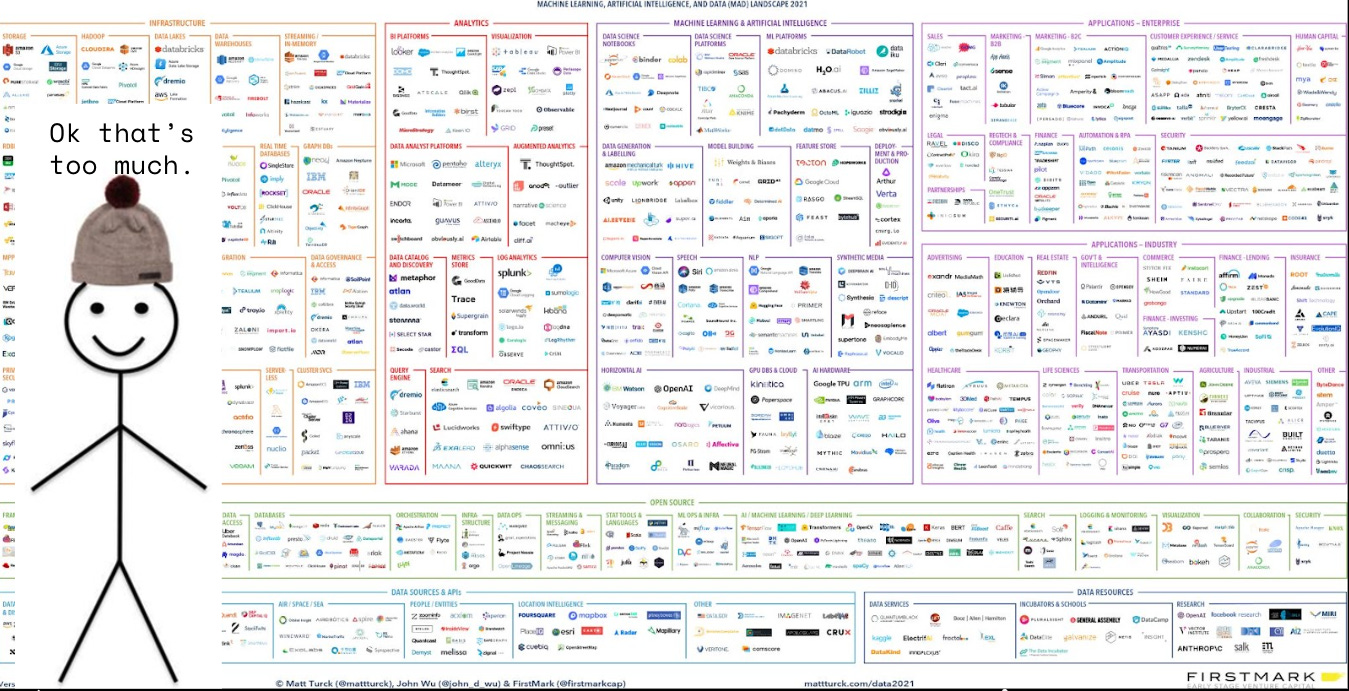

2021 ⏱️ The modern data stack mess

We are in an overdose of tooling era. If you are sometimes losing track of what’s happening, don’t worry; you are not alone. Even when doing my daily technology watch, I’m still amazed by all products I’ve never heard of in the above picture.

✔️Lesson #7: The best cloud provider is AWS GCP Azure

AWS had a headstart in the Cloud war, and it’s still a safe bet. But we have seen that each cloud provider somehow plays a different strategy.

Azure is great for existing Microsoft customers. There are a lot of migration paths, and contract-wise, well, it’s just an amendment to an existing contract. For old big corporate that required a ton of security and procurement processes to consider moving to the cloud, it’s a big win.

Google’s GCP has been focusing a lot on Machine Learning products which makes sense as it’s part of their core and initial product.

That being said, a lot of companies today have at least two cloud providers just for the sake of being able to negotiate better pricing.

Plus, with Kubernetes emerging as standard and getting easier to manage, I’ve often seen companies focusing primarily on these services to avoid too much vendor locking.

Aside from that, we have SaaS startups that are taking some part of the cake on niche services. They partner with cloud providers to offer the best in class experience while relying on the big cloud provider for server management. Databricks on Azure and Confluent’s Kafka on GCP are some examples.

✔️Lesson #8: The data stack needs consolidation

Having plenty of options is nice, but if we still need to figure out how the blocks are talking to each other, it may not be worth it.

One big plus we’ve seen these past years in the data world is the adoption of standard file formats. It mainly started with Parquet and now we have ACID file formats like Delta lake, Iceberg, and Hudi. A lot of cloud data warehouses have been pushing support on these.

Moving data just because we can’t use the data as it is was the most painful job to do, especially at scale. Glad we are finally getting away from this with more standards.

But no matter how many integrations we put in place, the hard truth is: we have too many tools. Some data vendors will just die. Or get acquired. Two weeks ago, Confluent (Kafka) announced the acquisition of Immerock (managed Flink). I’m sure most of us never actually heard of this startup.

2022 ⏱️ Python and SQL. Everywhere.

Some personal confession: I got a Python fatigue.

✔️Lesson #9: Data engineering/analytics is software engineering

As my career was mostly in data (BI, etc.) and not started as a classic software engineer, I always felt I had to catch up on some foundations like CI/CD, unit testing, and observability, just to name a few.

Many of these concepts have landed in data by now, but many people were (and some still are) not considering data as a software engineering asset.

Your metrics in your beautiful Tableau UI are software engineering assets that should be versioned, tested (in different environments), and included in a CI/CD pipeline.

✔️Lesson #10: Python & SQL is nice. Rust is efficient

Nope, it’s not a war of Python vs. Rust. It’s Python WITH Rust.

Python is here to stay, but Rust will impact (and it’s already happening) the data ecosystem at its core. It won’t probably impact the classic data users as they will still use Python binding for their data tasks.

This topic can be an entire blog, but if you want to know more, I’m putting one of my latest YouTube video below.

I’ll also do an online talk about this very topic on the 18th of January at the event organized by

, register here. It's free!⚔️ Brace yourself for 2023.

Here is the recap for part 2 :

✔️Lesson #6: Open-source is the new norm

✔️Lesson #7: The best cloud provider is AWS GCP Azure

✔️Lesson #8: The data stack needs consolidation

✔️Lesson #9: Data engineering/analytics is software engineering

✔️Lesson #10: Python & SQL is nice. Rust is efficient

The macroeconomic situation is playing in our favor. Only sustainable products will survive. That will clean up the ecosystem a bit.

Make sure you evaluate new shiny tools only when needed and double-check the maturity and viability of the product because who knows if it’s still going to be there in a year…

May the data be with you.